Training an algorithm to understand cultural context in visual data is a complex but increasingly necessary task in artificial intelligence. As visual recognition systems are deployed globally, in facial recognition, content moderation, and recommendation engines, they need cultural context to remain accurate, fair, and relevant. Here’s how researchers and developers approach this challenge.

Understanding the Problem

At its core, visual data interpretation involves recognizing patterns such as shapes, colors, objects, and scenes. However, visual cues carry different meanings across cultures. A gesture considered polite in one country might be offensive in another. Traditional clothing, religious symbols, and even food presentation can be read very differently depending on cultural context. If an AI model is trained primarily on data from a single cultural viewpoint, it risks making incorrect or even harmful assumptions.

Step 1: Diverse and Representative Datasets

The first step in training any algorithm to grasp cultural context is curating a dataset that includes a wide array of cultural representations. This means collecting images from various geographic locations, ethnic backgrounds, socioeconomic settings, and historical contexts. Data must be annotated not just with what is seen (a hand gesture, a dress, a street scene) but also with what it means in that cultural setting.

Crowdsourced annotations can be useful here, especially when using contributors from different cultural backgrounds to label the same data. This helps capture subjective cultural interpretations rather than just objective features.
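As a minimal sketch of this idea, the snippet below keeps labels separated by annotator background instead of collapsing them into one global majority vote. The image ID, region names, and labels are hypothetical placeholders, not data from a real annotation project.

```python
from collections import Counter, defaultdict

# Hypothetical annotations: (image_id, annotator_region, label).
annotations = [
    ("img_001", "region_a", "polite"),
    ("img_001", "region_a", "polite"),
    ("img_001", "region_b", "offensive"),
    ("img_001", "region_b", "offensive"),
    ("img_001", "region_b", "polite"),
]

def labels_by_group(rows):
    """Count labels per (image, annotator group) rather than per image only."""
    counts = defaultdict(Counter)
    for image_id, group, label in rows:
        counts[(image_id, group)][label] += 1
    return counts

def majority_per_group(rows):
    """Return the most common label for each (image, group) pair."""
    return {
        key: counter.most_common(1)[0][0]
        for key, counter in labels_by_group(rows).items()
    }

per_group = majority_per_group(annotations)
for (image_id, group), label in sorted(per_group.items()):
    print(image_id, group, label)
```

Keeping one label per group preserves disagreement between groups as signal, where a single pooled vote would discard it as noise.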

Step 2: Cultural Metadata and Contextual Tags

To give a model more than pixels to work with, visual data should be paired with metadata. This could include geographic location, language, historical references, and socio-political information. For example, an image of a street protest in France might have a different cultural meaning than a similar image in Hong Kong or Tehran. By tagging images with these contextual cues, algorithms can learn to associate visual elements with specific cultural environments.
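One possible way to represent such a pairing is a small record type that stores the visual labels next to the contextual fields. The class name, field names, and file names below are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass, field

@dataclass
class ImageRecord:
    """An image plus the contextual metadata described above (hypothetical schema)."""
    image_path: str
    visual_labels: list[str]          # what is seen
    region: str                       # where the image was taken
    language: str                     # language of any accompanying text
    context_tags: list[str] = field(default_factory=list)  # free-form context

def filter_by_region(records, region):
    """Select the records that share a geographic context."""
    return [r for r in records if r.region == region]

# Two records with identical visual labels but different cultural settings.
records = [
    ImageRecord("protest_01.jpg", ["crowd", "banner"], "FR", "fr", ["street protest"]),
    ImageRecord("protest_02.jpg", ["crowd", "banner"], "HK", "zh", ["street protest"]),
]

french_records = filter_by_region(records, "FR")
print([r.image_path for r in french_records])
```

Because the visual labels are identical in both records, only the metadata lets a downstream model tell the two settings apart.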

Knowledge graphs and semantic ontologies can also assist. These structures help the algorithm relate objects, actions, and settings to broader cultural narratives and meanings.
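A knowledge graph can be approximated, for illustration only, as a set of subject-relation-object facts scoped by region. The sketch below is a toy in-memory structure with made-up entries and placeholder region names; a production system would more likely use a dedicated graph store and a curated ontology.

```python
from collections import defaultdict

class ContextGraph:
    """Tiny in-memory store of (subject, relation, object) facts scoped by region."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, subject, relation, obj, region):
        self._edges[(subject, relation, region)].add(obj)

    def query(self, subject, relation, region):
        # .get avoids creating empty entries for keys that were never added.
        return sorted(self._edges.get((subject, relation, region), set()))

graph = ContextGraph()
# Illustrative entries only; regions are placeholders.
graph.add("white_clothing", "associated_with", "wedding", "region_a")
graph.add("white_clothing", "associated_with", "mourning", "region_b")

print(graph.query("white_clothing", "associated_with", "region_a"))
print(graph.query("white_clothing", "associated_with", "region_b"))
```

The same detected object resolves to different meanings depending on the region passed to the query, which is the behavior the ontology is meant to supply.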

Step 3: Multimodal Learning Approaches

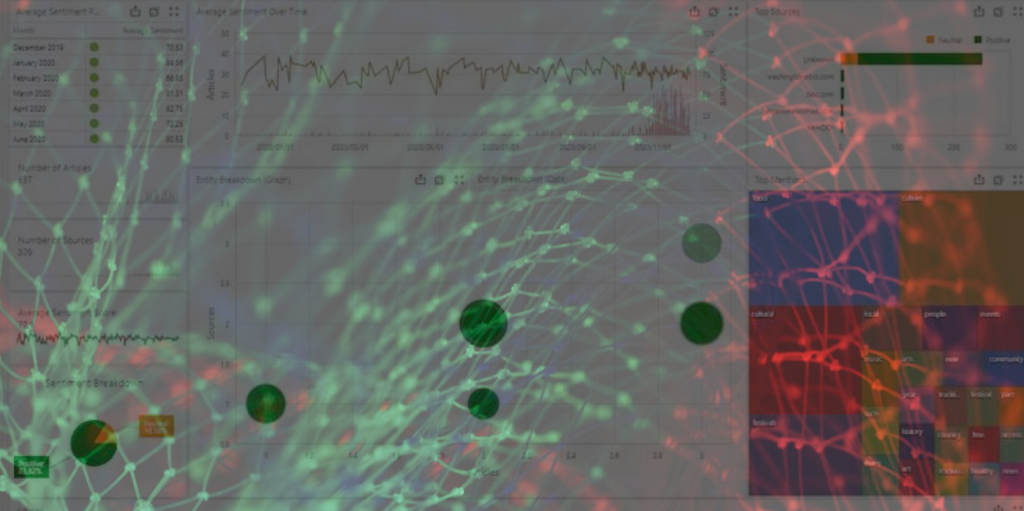

Training a model solely on visual data is rarely enough. Multimodal learning, where an AI system is trained on multiple types of data such as images, text, and audio, can improve contextual understanding. For instance, pairing a photo of a traditional festival with descriptive text in the local language helps the algorithm learn not just the visual elements but also their cultural significance.

Natural language processing (NLP) models trained on culturally diverse corpora can be used alongside visual models to interpret cultural context more effectively.
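One common way to combine the two modalities is to map images and text into a shared embedding space and compare them by cosine similarity. The sketch below assumes such embeddings already exist; the three-dimensional vectors and caption strings are made up for illustration, and no particular encoder is implied.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length; a zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    a, b = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a, b))

def best_caption(image_embedding, caption_embeddings):
    """Pick the caption whose embedding is closest to the image embedding."""
    return max(
        caption_embeddings,
        key=lambda name: cosine(image_embedding, caption_embeddings[name]),
    )

# Hypothetical embeddings standing in for the outputs of image and text encoders.
image_vec = [0.9, 0.1, 0.0]
captions = {
    "harvest festival": [1.0, 0.0, 0.0],
    "street market": [0.0, 1.0, 0.0],
}

match = best_caption(image_vec, captions)
print(match)
```

If the text encoder was trained on culturally diverse corpora, the captions it can match against carry that context into the visual side.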

Step 4: Human-in-the-Loop Feedback

AI systems benefit significantly from human feedback, especially in interpreting nuance. Including humans in the training and evaluation loops helps the model learn from real-world interpretations rather than just “guessing” from data patterns. This iterative feedback process refines the algorithm’s understanding of culturally sensitive or complex visuals.
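A simple form of this loop is to send uncertain or sensitive predictions to human reviewers instead of accepting them automatically. The sketch below assumes the model reports a confidence score; the threshold of 0.8, the label names, and the `Prediction` type are hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    image_id: str
    label: str
    confidence: float

def route(predictions, threshold=0.8, sensitive_labels=frozenset()):
    """Split predictions into auto-accepted ones and ones needing human review.

    A prediction goes to review if the model is unsure or if its label
    belongs to a category flagged as culturally sensitive.
    """
    accepted, review_queue = [], []
    for p in predictions:
        if p.confidence < threshold or p.label in sensitive_labels:
            review_queue.append(p)
        else:
            accepted.append(p)
    return accepted, review_queue

preds = [
    Prediction("img_001", "street_scene", 0.95),
    Prediction("img_002", "hand_gesture", 0.97),
    Prediction("img_003", "festival", 0.55),
]

accepted, review_queue = route(preds, sensitive_labels=frozenset({"hand_gesture"}))
print([p.image_id for p in accepted])
print([p.image_id for p in review_queue])
```

Reviewer decisions on the queued items can then be added back to the training set, which is what makes the process iterative.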

Bias detection and correction frameworks are also important. Regular audits by culturally diverse teams can reveal blind spots in the model’s learning and help correct them before deployment.
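One basic audit is to compare accuracy across cultural or regional groups on a labeled evaluation set. The sketch below uses made-up evaluation rows and placeholder group names; real audits typically add further metrics and qualitative review.

```python
from collections import defaultdict

def accuracy_by_group(rows):
    """rows: iterable of (group, true_label, predicted_label)."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, truth, pred in rows:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

def accuracy_gap(per_group):
    """Difference between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())

# Hypothetical evaluation results.
eval_rows = [
    ("region_a", "greeting", "greeting"),
    ("region_a", "wedding", "wedding"),
    ("region_a", "market", "market"),
    ("region_a", "festival", "festival"),
    ("region_b", "greeting", "insult"),
    ("region_b", "wedding", "wedding"),
    ("region_b", "market", "market"),
    ("region_b", "festival", "protest"),
]

per_group = accuracy_by_group(eval_rows)
gap = accuracy_gap(per_group)
print(per_group, gap)
```

A large gap between groups is a prompt for the audit team to inspect the failing examples, not a diagnosis in itself.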

Step 5: Continuous Learning and Adaptation

Cultural norms evolve over time. What was acceptable or typical a decade ago might now be outdated or offensive. To keep pace, AI models must be designed for continuous learning, incorporating new data and feedback as societal values shift. This is especially true in global applications, where localization is not just about language, but also visual semantics.

A computer vision platform built from modular, updatable components lets developers feed in new cultural data and adjust interpretations without retraining the entire model from scratch.
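One way to picture such modularity is to separate a fixed base classifier from a per-region interpretation layer that can be updated on its own. The sketch below is an assumption about how that split might look: `CulturalInterpreter`, the stub classifier, and the mappings are hypothetical, and it updates only a lookup layer, not model weights.

```python
class CulturalInterpreter:
    """Wraps a base classifier with per-region meaning tables that can be updated."""

    def __init__(self, base_classifier):
        self._classify = base_classifier   # stays fixed between updates
        self._tables = {}                  # region -> {visual label -> meaning}

    def update_region(self, region, mapping):
        """Add or overwrite interpretations for one region without touching others."""
        self._tables.setdefault(region, {}).update(mapping)

    def interpret(self, image, region):
        label = self._classify(image)
        meaning = self._tables.get(region, {}).get(label, "unknown")
        return label, meaning

# Stub standing in for a trained vision model (always predicts the same label).
def stub_classifier(image):
    return "hand_gesture_x"

interpreter = CulturalInterpreter(stub_classifier)
interpreter.update_region("region_a", {"hand_gesture_x": "approval"})
before = interpreter.interpret("photo.jpg", "region_b")

# New feedback arrives later; only the region_b table changes.
interpreter.update_region("region_b", {"hand_gesture_x": "offensive"})
after = interpreter.interpret("photo.jpg", "region_b")
print(before, after)
```

The trade-off is that a lookup layer can only reinterpret labels the base model already produces; recognizing new visual concepts would still require updating the model itself.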

Conclusion

Training an algorithm to understand cultural context in visual data is a multidimensional challenge that blends technology, anthropology, and ethics. Through diverse datasets, metadata enrichment, multimodal learning, human feedback, and continuous adaptation, AI systems can be better equipped to navigate the rich tapestry of global cultures. As we move toward more inclusive and responsible AI, embedding cultural awareness into visual recognition models is not just a technical goal—it’s a societal imperative.